spotmarket – 0.4

Posted: 2016-03-18 Filed under: python, spotmarket | Tags: amarr, dodixie, fountain, jita, PLEX, rens Leave a commentOverview

Whew, this was a big step in the right direction. I added a lot of sanity (keep everything in UTC), usability, and cleanup to the existing design. We’ve got moongoo, the beginnings of indexes, and CREST killmail data now flowing in to the growing dataset. The backend was improved by migrating to PostgreSQL 9.5.1 and I also cleaned up the install directions to try to make it easier.

[bug] Change timestamp for zkillboard and markethistory consumer to use UTC for mental sanity

[enhancement] Add 404 error page

[enhancement] Change to postgres 9.5.1 to support jsonb

[enhancement] Parameterize graph functions

[enhancement] Change date format in graphs to ISO for mental sanity

[enhancement] Standardize table formatting

[enhancement] Supervisor to make Flask web service persistent

[enhancement] Add jsonb index on data.killmails for typeID and solarSystemID

[enhancement] Add paging to zKillboard consumer

[enhancement] Add check to resume from last recorded page for zKillboard consumer

[enhancement] Add basic exception handling to zKillboard consumer

[new] Creation of ship index report

CREST Verified Moon Minerals

It is no secret that I have an affinity for finding accurate moon data. In a previous post, I’ve gone so far as to chart the regional density of each moon mineral, showing that there were built in skews as to where each type was placed for each class (R8, R16, etc).

In the Rubicon expansion, CCP introduced siphon units, which can be anchored near a POS to slowly leech items from within the shields. When an extractor is destroyed the killmail will show what moon mineral was in it. This, combined with the x,y,z location data that started to be exposed after the Parallax expansion, can be used to locate the nearest celestial object to give us a verified report of what moon mineral is contained in the object.

I gathered some examples for anyalisis, and over a long flight, I started to put some code together to use killmail x,y,z coordinates to parse CREST killmails. After implementation and testing, it has proven to be accurate so I’ve included the feature in this release. There is a manual step to get the moon mineral data into a table where it is used in the web frontend; I wanted to keep them separate to isolate the two datasets.

PostgreSQL Upgrade to 9.5.1

Rather than writing a parser for CREST killmails, I decided to store the JSON itself for simplicity. Developing on 9.3 with the json datatype proved to be a path that I did not want to go down. 9.3 is not the prefered version and lacked support for a fancy datatype inherit to 9.4+ and above. The following blog post also convinced me to migrate.

The proof is in the numbers so here’s a side-by-side comparison of the same query on the same dataset with only the datatype being different. The two virtual machines also had access to the same amount of CPU and RAM to control the results.

This query was proof enough that I made the right choice. Later that night while checking my logging table, I also noticed an improvement in insert speed.

9.3 (json) [kills] insert 2982 @ 93.81 rec/sec 9.5.1 (jsonb) [kills] insert 2850 @ 124.44 rec/sec

Not bad for about 45 minutes of testing and correcting install instructions.

0.5 Release

What’s up for the next release? Check out the TODO.md for a full list of items slated for each release. The main focus is going to be putting more control in the web front end, letting you enable/disable import items, add item to the market/zKillboard watch list, etc.

spotmarket – pivot and resample

Posted: 2016-01-29 Filed under: market, python, spotmarket | Tags: blood raiders, cloud ring, delve, fade, fountain, outer ring, period basis, querious, serpentis, syndicate Leave a commentOverview

I published v0.2, which includes a bunch of hacked together proof of concept code. This week I dove into the pandas library, focusing on the data manipulation tools that are inherit to pandas. This post covers some of the highlights and demonstrates the initial charting that I’ve been producing.

More to come on what I plan to do with the data; for now those details have to be a trade secret.

Data Frame

The crux of pandas is the dataframe and this week I discoverd that you can load your query results right into a dataframe and not have to write a parser. Notice in the below code that I run the query and place the results into a dataframe with a ‘fetchall()’. This really saves me a lot of headaches when passing around chunks of data and makes it easy to write functions to combine data with overlapping values. Return the dataframe and do more ::work::.

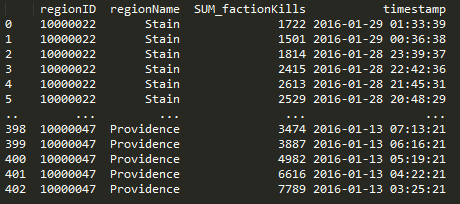

Here’s a query that I use to get NPC kills by region when given a regionID.

def getnpckills_byregion(regionID):

conn = psycopg2.connect(conn_string)

cursor = conn.cursor()

sql = """SELECT

"mapSolarSystems"."regionID",

"mapRegions"."regionName",

SUM(mapkills."factionKills") as SUM_factionKills,

mapkills."timestamp"

FROM

data.mapkills,

public."mapRegions",

public."mapSolarSystems"

WHERE

"mapRegions"."regionID" = "mapSolarSystems"."regionID" AND

"mapSolarSystems"."solarSystemID" = mapkills."solarSystemID" AND

public."mapSolarSystems"."regionID" = %s

GROUP BY mapkills."timestamp", public."mapSolarSystems"."regionID", "mapRegions"."regionName"

ORDER BY timestamp DESC

"""

data = (regionID, )

cursor.execute(sql, data)

df = pd.DataFrame(cursor.fetchall(),columns=['regionID', 'regionName', 'SUM_factionKills', 'timestamp'])

cursor.close()

return df

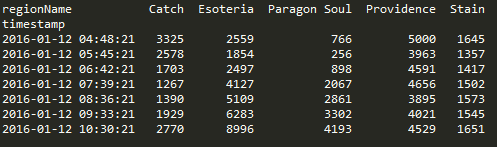

Pivot

Doing a pivot in pandas is amazingly elegant and you can perform the operation in one statement.

df.pivot(index='timestamp', columns='regionName', values='SUM_factionKills')

Resample

Another powerful tool in the library are the resampling options. I’m still trying adjusting the parameters to find a balance on data granularity.

The below graphs show NPC kills over all regions over time. One graph has no resampling while the other has values that I feel display a daily value, normalizing the peaks and valleys that occur due to server downtime and differing activity rates due to timezone.

conversion = "12H" v_window = 8 v_minperiods = 1 pd.rolling_mean(df.resample(conversion, fill_method='bfill'), window=v_window, min_periods=v_minperiods)

Results

Ratting activity in Blood Raiders regions:

Ratting activity in Serpentis regions:

More to come. Find me on #k162space on tweetfleet.slack.com.

Relocating Capital Building Operations

Posted: 2013-12-18 Filed under: industry, market, nullsec, ships | Tags: archon, chimera, delve, fountain, G-TT5V, moros, naglfar, nidhoggur, revelation, thanatos Leave a commentWith the stabilization of the Fountain and Delve regions over the past few months, the amount of sales we have seen in our building system have decreased. Raath made a command decision to leave the region in search for greener pastures.

Operation Performance

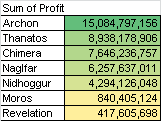

Our time in G-TT5V spanned 385 days where we built 162 capital hulls to sell on the local market. Our sales totaled 221.4 B ISK with a total profit coming in at 43.3 B. Performance metrics show a margin of 4.1%, an average of 3.4 B per month in profit, and a velocity of 5.06 hulls moved per week.

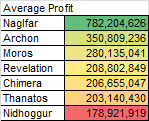

Top Performers

Given the balance changes to the Naglfar hull that came in the Odyssey update, we have seen a very strong demand the the hull; it is not a surprise that it has been a top performer. If you want to mass build, pick the Archon or Thanatos.

Capital Runs 1-109

Posted: 2013-07-06 Filed under: market, ships | Tags: archon, chimera, fountain, moros, naglfar, nidhoggur, revelation, thanatos 5 CommentsOverview

Project has been running now for 239.5 days and has generated 33.2 B in profit averaging 304.9 M/hull with a sale every 2.19 days.

War is Good for Business

I am positioned to sell to anyone due to my building location as it is not a sovereign station. There was a noticeable uptick in armament when the Fountain campaign was announced in late April of 2013.

Large-scale Nullsec Industry

Posted: 2013-01-16 Filed under: industry, nullsec, ships | Tags: 6VDT-H, fountain, ogaria, solitude 4 CommentsOverview

Nullsec industry does exist, but not as you would expect. There are significant more logistical hurdles involved, which makes it a strange incarnation when compared to industry in the contiguous Highsec world.

While reading over the CSM7 Winter 2012 Summit Minutes, I felt the desire to expose my method of industry to help the CSM and CCP better understand how large-scale people are working with the current design of Nullsec.

For reference the Nullsec industry discussion that sparked this post starts on page 45, second to last paragraph of the CSM Minutes.

Heavy Experience

I’ve built over 663 Battleships, 1,270 Battlecruisers, and 32 Carriers.

Since I hold a disdain for the UI involved in the Invention process, I’ve done limited amounts of Tech2 Invention. This means that I have purchased my items mainly in bulk from manufactures. My report shows that I have moved around 28,000 modules.

The amount of ISK I’ve touched is quite amazing, coming in at 836 B ISK.

In CCP’s new terminology I’m called an enabler.

Seagull: Enablers are the people who make the logistics for these large-scale things actually work. They are people who run mad spreadsheets to organize production lines for war efforts… … – Page 5 of the CSM Minutes

All my efforts are to supply hordes of nullsec warriors with tools to win battles — to make sure that our troops on deployment have Autocannons and Hulls that can fit the doctrine.

Methodology

Here are my C-level objectives to keep in mind as I outline my process for building in Nullsec:

- Minimize risk by securing assets.

- Minimize time sinks so that I make more for less play time.

- Maximize market velocity so the ISK flows.

Efficient Logistics

Wait, people mine in Nullsec!? Yes, over the past three years of building I have found small niche groups that do mine locally in null. I’ve even developed a few agreements to source discounted materials in bulk when available.

I don’t want to disprove that people mine and do Tech1/2 industry in sovereign nullsec stations, but rather to show that there are paths of least resistance to getting better [faster] results.

Despite local groups mining, sourcing your materials in from Highsec is far more efficient that waiting for miners to produce results while periodically dodging AFK neutrals. Buy in bulk in Highsec, compressing, jump in, uncompress, and building is quick. Elise (@EliseRandolph) clearly understands this process as noted on page 45 in the fourth paragraph of the CSM Minutes.

Bringing in assets from Highsec reduces my timesink as I can buy in bulk from a large pool of suppliers.

The POS System

This may be a scary point to accept, but the easiest solution to making sure your industrial assets are secure is to not use the POS system.

With a deployed asset out in space, you are vulnerable to attack. Your ships, minerals, and datacores can all be locked if the tower is put into reinforced. Rather than use a user-deployed structure, I have opted to use NPC pockets withing large alliances or a Lowsec system that is within close jump range to the alliance main staging system. Additionally I detest the process of fueling, deploying, anchoring, and onlining structures so I have gravitated away from the POS system.

Not using a POS in space allows me to secure my assets inside a NPC station. There is no risk of my assets being locked in a station when sovereignty flips. For me, there is no clear need for an industrialist to setup a POS network, maintain fuel, and defend assets when there are logistically easy options available.

Side conversation: I’ve heard so many ramblings about reducing jump range to combat this logistical option. If jump ranges are reduced, I will create another cyno alt and place him in-line of my path efficiently making the range adjustment pointless. Two jumps now rather than one? Bleh.

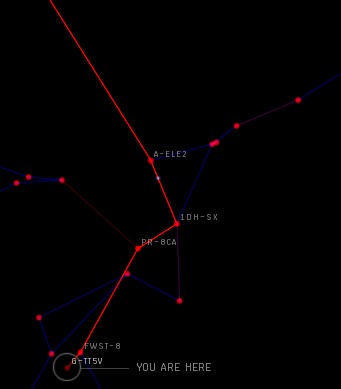

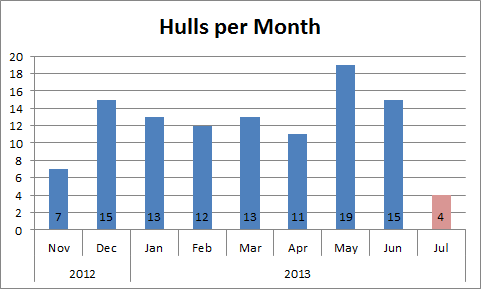

System Selection

Large hubs are not hard to find in Nullsec. If you go to Dotlan, select a region, and filter by jumps over 24 hours, you can easily see where the traffic is located.

For TEST’s old capital region of Fountain, you can clearly see that 6VDT-H is the focal point of traffic. The main system of 6VDT is in within one jump of Ogaria, a factory system in Solitude. Here you can peacefully uncompress minerals, construct, and have easy access to Solitude research slots in a Highsec island.

Selecting a place with a large amount of Nullsec people enables you to have high market velocity so that you can transform your goods back into ISK, which can then go right back into your construction projects. Money makes money.

So, Game Mechanics

I don’t claim to be a game designer so I can’t offer a 1-2-3 punch-list of what to change in order to reach an ideal state of Nullsec industry. What I want to show is that given the current system, one person has the ability to produce large amounts of change on the industry landscape in Nullsec without the need for any locally sourced goods.

My sentiments for Nullsec industry change are very basic. There needs to be a reason for an enabler like me to setup shop locally. Conversely there needs to be a reason for the instigators to defend what I have build.